Building my first AI Agent - Part 0: Basics

Updated: March 2026

Why am I building an AI agent?

Let's face it: AI is everywhere now, and as skeptical as I was, the truth is that it has come a long way since 2022. My workplace has been pushing it non-stop on all our tasks, and after realizing that AI would always code faster than me, I decided to give it a go on most of my work tasks.

However, for my personal projects I still go old-school by doing artisanal handmade coding. But as usual, my curiosity wants me to learn how things like Claude, Claude Code, ChatGPT and other agentic software work. So I decided to build my own agent from scratch (though without training my own model).

The project I want to build is an assistant that can do knowledge retrieval, tool calling and memorization. But it goes beyond just answering questions — I want an agent that can anticipate what I need. One that monitors what I'm working on and proactively says "hey, you have some notes that might be relevant here" before I even think to ask.

This will encompass pretty much all the aspects I want to learn: tool calling, agent loop, knowledge retrieval, memory, and proactive context suggestions.

The plan

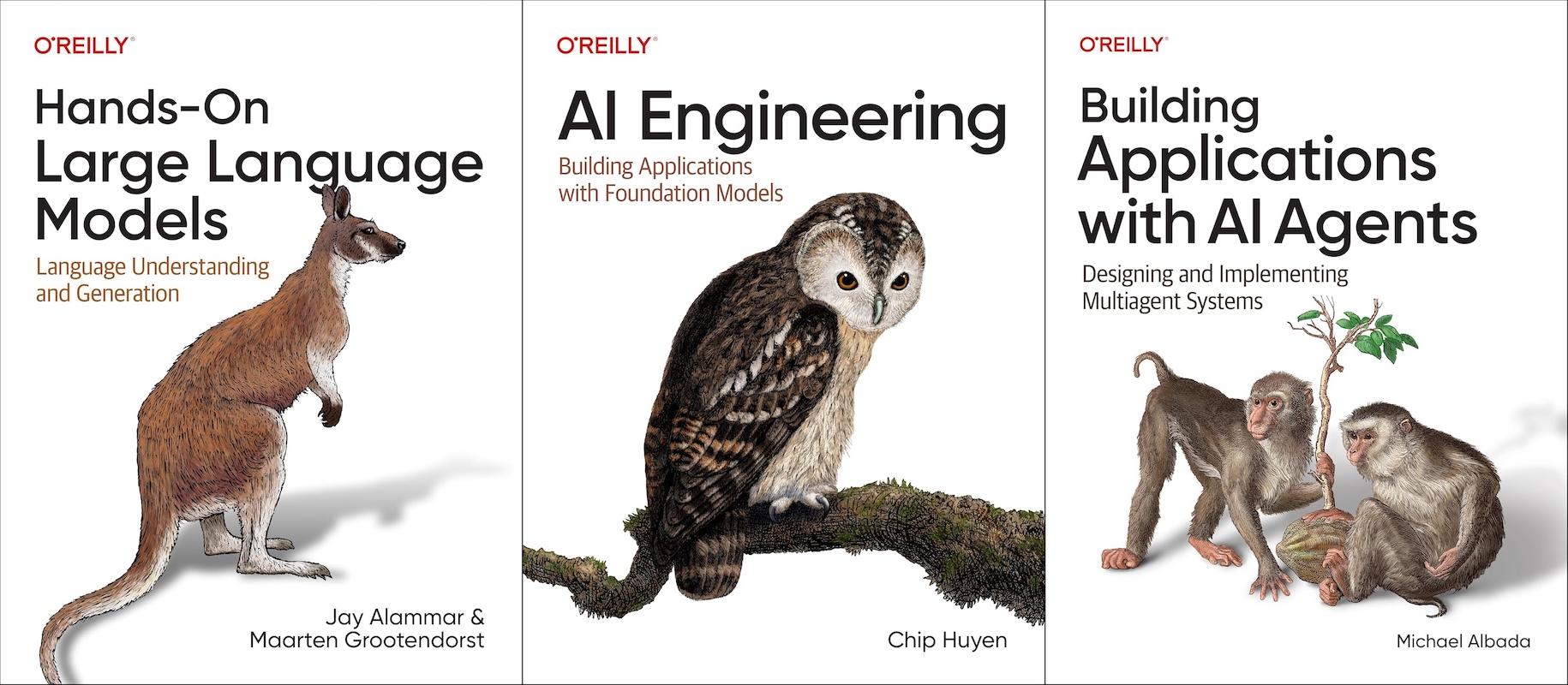

As usual, my all-or-nothing thinking got me to buy a couple of books on the subject. The ones I got are:

- Hands-On Large Language Models: Language Understanding and Generation by Alammar and Grootendorst

- AI Engineering: Building Applications with Foundation Models by Huyen

- Building Applications with AI Agents: Designing and Implementing Multiagent Systems by Albada

Since the original version of this post, my understanding of what goes into an agentic system has grown quite a bit. I've refined the original milestones into seven phases that cover the core concepts without overreaching. The goal is to build something real and actually finish it.

Here's the updated roadmap:

- Phase 1: The Chat Relay — Connect to an LLM API, send messages, get responses. The "hello world" of agents.

- Phase 2: Tool Use — Let the LLM call functions that the system executes. Define a `Tool` trait, build a registry, wire up the execution engine.

- Phase 3: The Agent Loop — The big conceptual shift. The LLM can now call tools repeatedly until it has a final answer, not just once.

- Phase 4: RAG — Build a knowledge base the agent can search. Chunk documents, generate embeddings, and do vector similarity search.

- Phase 5: MCP Integration — Connect to external tool servers via the Model Context Protocol. Dynamic tool discovery, JSON-RPC transport, the whole thing.

- Phase 6: Memory — Persistent memory across conversations. Session history, semantic facts, and the challenge of deciding what to remember and what to forget.

- Phase 7: Proactive Context Suggestions — This is the one I'm most excited about. Instead of only answering when asked, the agent monitors the conversation and surfaces relevant knowledge from the knowledge base before you ask for it. Imagine discussing an idea and the agent says "you have some notes related to this — want me to pull them up?"
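To make Phases 2 and 3 from the roadmap above a bit more concrete, here is a minimal sketch of a tool trait, a registry, and a loop that keeps calling tools until a final answer arrives. Everything except the idea of a `Tool` trait is an assumption for illustration: the `Add` tool, the `Registry` and `Step` types, the `run_agent` function, and the scripted closure standing in for the LLM are all hypothetical, and the real design may end up looking quite different.

```rust
use std::collections::HashMap;

/// A capability the agent can invoke (Phase 2). Hypothetical shape.
trait Tool {
    fn name(&self) -> &str;
    fn call(&self, input: &str) -> Result<String, String>;
}

/// Toy tool: sums whitespace-separated integers.
struct Add;

impl Tool for Add {
    fn name(&self) -> &str {
        "add"
    }

    fn call(&self, input: &str) -> Result<String, String> {
        let mut sum = 0i64;
        for part in input.split_whitespace() {
            sum += part.parse::<i64>().map_err(|e| e.to_string())?;
        }
        Ok(sum.to_string())
    }
}

/// Maps tool names to their implementations.
#[derive(Default)]
struct Registry {
    tools: HashMap<String, Box<dyn Tool>>,
}

impl Registry {
    fn register(&mut self, tool: Box<dyn Tool>) {
        self.tools.insert(tool.name().to_string(), tool);
    }

    fn execute(&self, name: &str, input: &str) -> Result<String, String> {
        match self.tools.get(name) {
            Some(tool) => tool.call(input),
            None => Err(format!("unknown tool: {}", name)),
        }
    }
}

/// What the model asks for on each turn: a tool call or a final answer.
enum Step {
    ToolCall { name: String, input: String },
    Final(String),
}

/// Phase 3: ask the model for a step, run any requested tool, and repeat
/// until it returns a final answer or the step budget runs out.
fn run_agent<F>(registry: &Registry, mut next_step: F, max_steps: usize) -> Result<String, String>
where
    F: FnMut(&[String]) -> Step,
{
    let mut observations: Vec<String> = Vec::new();
    for _ in 0..max_steps {
        match next_step(&observations) {
            Step::Final(answer) => return Ok(answer),
            Step::ToolCall { name, input } => {
                // Tool errors become observations so the model can react to them.
                let result = registry
                    .execute(&name, &input)
                    .unwrap_or_else(|e| format!("error: {}", e));
                observations.push(result);
            }
        }
    }
    Err("step limit reached".to_string())
}

fn main() {
    let mut registry = Registry::default();
    registry.register(Box::new(Add));

    // Scripted stand-in for the LLM: one tool call, then a final answer.
    let scripted = |obs: &[String]| match obs.last() {
        None => Step::ToolCall { name: "add".to_string(), input: "2 3".to_string() },
        Some(result) => Step::Final(format!("The sum is {}", result)),
    };

    println!("{:?}", run_agent(&registry, scripted, 4));
}
```

The `max_steps` budget is there because a loop driven by model output has no natural guarantee of terminating; a real agent would likely need something similar.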

Each phase builds on the previous one and produces a working, demoable system. The idea is to learn by building incrementally — not by trying to architect everything upfront.
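As a taste of the retrieval step in Phase 4, vector similarity search can be sketched as a brute-force scan using cosine similarity. This is only an illustration under loud assumptions: the `Chunk` type and `top_k` function are hypothetical names, and the two-dimensional "embeddings" are hand-made toy vectors, not the output of a real embedding model.

```rust
/// Cosine similarity between two vectors.
/// Returns 0.0 if the lengths differ or either vector has zero norm.
fn cosine_similarity(a: &[f32], b: &[f32]) -> f32 {
    if a.len() != b.len() {
        return 0.0;
    }
    let dot: f32 = a.iter().zip(b).map(|(x, y)| x * y).sum();
    let norm_a = a.iter().map(|x| x * x).sum::<f32>().sqrt();
    let norm_b = b.iter().map(|x| x * x).sum::<f32>().sqrt();
    if norm_a == 0.0 || norm_b == 0.0 {
        0.0
    } else {
        dot / (norm_a * norm_b)
    }
}

/// A piece of a document plus its embedding (hypothetical shape).
struct Chunk {
    text: String,
    embedding: Vec<f32>,
}

/// Brute-force search: score every chunk against the query, keep the best k.
fn top_k<'a>(query: &[f32], chunks: &'a [Chunk], k: usize) -> Vec<(&'a Chunk, f32)> {
    let mut scored: Vec<(&Chunk, f32)> = chunks
        .iter()
        .map(|c| (c, cosine_similarity(query, &c.embedding)))
        .collect();
    // Highest similarity first.
    scored.sort_by(|a, b| b.1.total_cmp(&a.1));
    scored.truncate(k);
    scored
}

fn main() {
    // Toy vectors chosen by hand; a real system would get these from a model.
    let chunks = vec![
        Chunk { text: "rust notes".to_string(), embedding: vec![1.0, 0.0] },
        Chunk { text: "cooking notes".to_string(), embedding: vec![0.0, 1.0] },
        Chunk { text: "systems notes".to_string(), embedding: vec![0.9, 0.1] },
    ];

    for (chunk, score) in top_k(&[1.0, 0.0], &chunks, 2) {
        println!("{:.3}  {}", score, chunk.text);
    }
}
```

A linear scan like this is fine for a personal knowledge base of modest size; whether it needs replacing with an index is something to measure rather than assume.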

The tools

Since I love using Rust and this is just for my own curiosity, I will keep it simple and go for a Rust implementation. Immersing myself is how I learn best.

For the LLM, I want to keep things provider-agnostic. The system should work with any LLM API that supports the standard patterns like tool calling. Whether that's Ollama for local models, the Anthropic API, or something else, the architecture shouldn't care. The model is an implementation detail that can be swapped out. Starting with Ollama means it's cheap and anyone can replicate it; supporting cloud APIs means access to more capable models when needed.
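One way to keep the model swappable is to hide each backend behind a trait, so the rest of the code never names a specific provider. The sketch below assumes a hypothetical `ChatProvider` trait with two stand-in backends (`EchoProvider` and `CannedProvider`) that make no network calls. It also simplifies the interface to a single prompt string; a real Ollama or Anthropic client would take a full message history and go behind the same kind of trait.

```rust
/// Hypothetical provider abstraction: anything that can answer a prompt.
trait ChatProvider {
    fn name(&self) -> &str;
    fn complete(&self, prompt: &str) -> Result<String, String>;
}

/// Stand-in backend that echoes the prompt back.
struct EchoProvider;

impl ChatProvider for EchoProvider {
    fn name(&self) -> &str {
        "echo"
    }

    fn complete(&self, prompt: &str) -> Result<String, String> {
        Ok(format!("echo: {}", prompt))
    }
}

/// Stand-in backend that always returns the same reply.
struct CannedProvider {
    reply: String,
}

impl ChatProvider for CannedProvider {
    fn name(&self) -> &str {
        "canned"
    }

    fn complete(&self, _prompt: &str) -> Result<String, String> {
        Ok(self.reply.clone())
    }
}

/// Application code depends only on the trait, never on a concrete backend.
fn ask(provider: &dyn ChatProvider, question: &str) -> Result<String, String> {
    let question = question.trim();
    if question.is_empty() {
        return Err("empty question".to_string());
    }
    provider.complete(question)
}

fn main() {
    // Swapping the model means swapping which value goes in this list.
    let backends: Vec<Box<dyn ChatProvider>> = vec![
        Box::new(EchoProvider),
        Box::new(CannedProvider { reply: "hello from a canned backend".to_string() }),
    ];

    for backend in &backends {
        println!("{} -> {:?}", backend.name(), ask(backend.as_ref(), "hi"));
    }
}
```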

As for the agentic loop, I could use something like LangChain or LangGraph, but honestly I think I will learn more if I just go raw with Rust and see where I get. At the end of the day, I want to understand and learn the implementation of a full agentic assistant. I'll use Claude Code for the boilerplate-heavy stuff once I've internalized each pattern, but the core concepts get written by hand.

The project

I like using codenames for my projects; as a kid I loved movies and shows that used them. For this project, I decided to go for Tonalli, an Aztec concept around the mind and the soul. I thought this would go nicely with an AI agent.

Next steps

The first thing is to tackle Phase 1: the basic interface to send messages to the LLM. A simple CLI that connects to an LLM API and lets me have a multi-turn conversation. No tools, no memory, no RAG — just the foundation.
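The core of a multi-turn conversation is just a growing list of messages that gets sent in full on every turn. Here is a rough sketch of that idea, assuming hypothetical `Role`, `Message`, and `chat_turn` names and a `fake_model` function standing in for the real API call. To keep it runnable anywhere, `main` feeds in scripted inputs instead of reading from the terminal.

```rust
#[derive(Debug, Clone, Copy, PartialEq)]
enum Role {
    User,
    Assistant,
}

#[derive(Debug, Clone, PartialEq)]
struct Message {
    role: Role,
    content: String,
}

/// Runs one turn: records the user's input, asks the model for a reply
/// given the full history, records the reply, and returns it.
fn chat_turn<F>(history: &mut Vec<Message>, input: &str, model: F) -> String
where
    F: Fn(&[Message]) -> String,
{
    history.push(Message { role: Role::User, content: input.to_string() });
    // The whole history goes to the model each turn; that is what gives it context.
    let reply = model(history.as_slice());
    history.push(Message { role: Role::Assistant, content: reply.clone() });
    reply
}

/// Hypothetical stand-in for the LLM API call: it only reports how many
/// user messages it has seen, which shows that history is being carried over.
fn fake_model(history: &[Message]) -> String {
    let turns = history.iter().filter(|m| m.role == Role::User).count();
    format!("(reply to user message #{})", turns)
}

fn main() {
    let mut history: Vec<Message> = Vec::new();

    // Scripted inputs; a real CLI would read these lines from stdin.
    for input in ["hello", "what did I just say?"] {
        let reply = chat_turn(&mut history, input, fake_model);
        println!("> {}\n{}", input, reply);
    }

    println!("history now holds {} messages", history.len());
}
```

Passing the model in as a closure or function keeps `chat_turn` testable without a network connection, which should help when the real API client arrives.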

From there, I'll work through the phases incrementally, writing about what I learn at each step. The blog posts will force me to consolidate my understanding, and building in the open means the community can pressure-test my design decisions.

What to expect in this series

- Part 1: The Chat Relay — LLM integration and conversation history

- Part 2: Tool Use — function calling and the tool registry

- Part 3: The Agent Loop — chaining tool calls into multi-step reasoning

- Part 4: RAG — knowledge base and vector search

- Part 5: MCP — connecting to external tool servers

- Part 6: Memory — persistence across conversations

- Part 7: Proactive Suggestions — an agent with initiative

Stay tuned for Part 1!